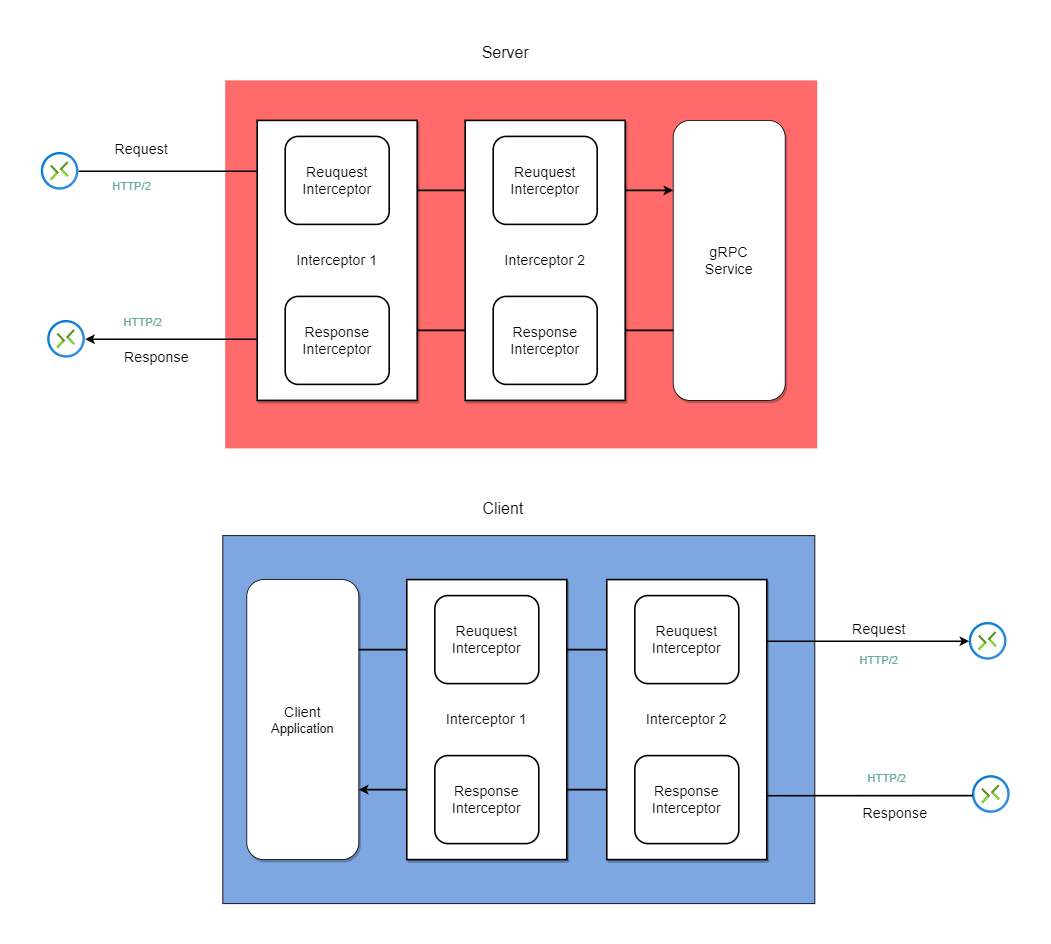

gRPC Interceptor: unary interceptor with code example

April 30, 2022

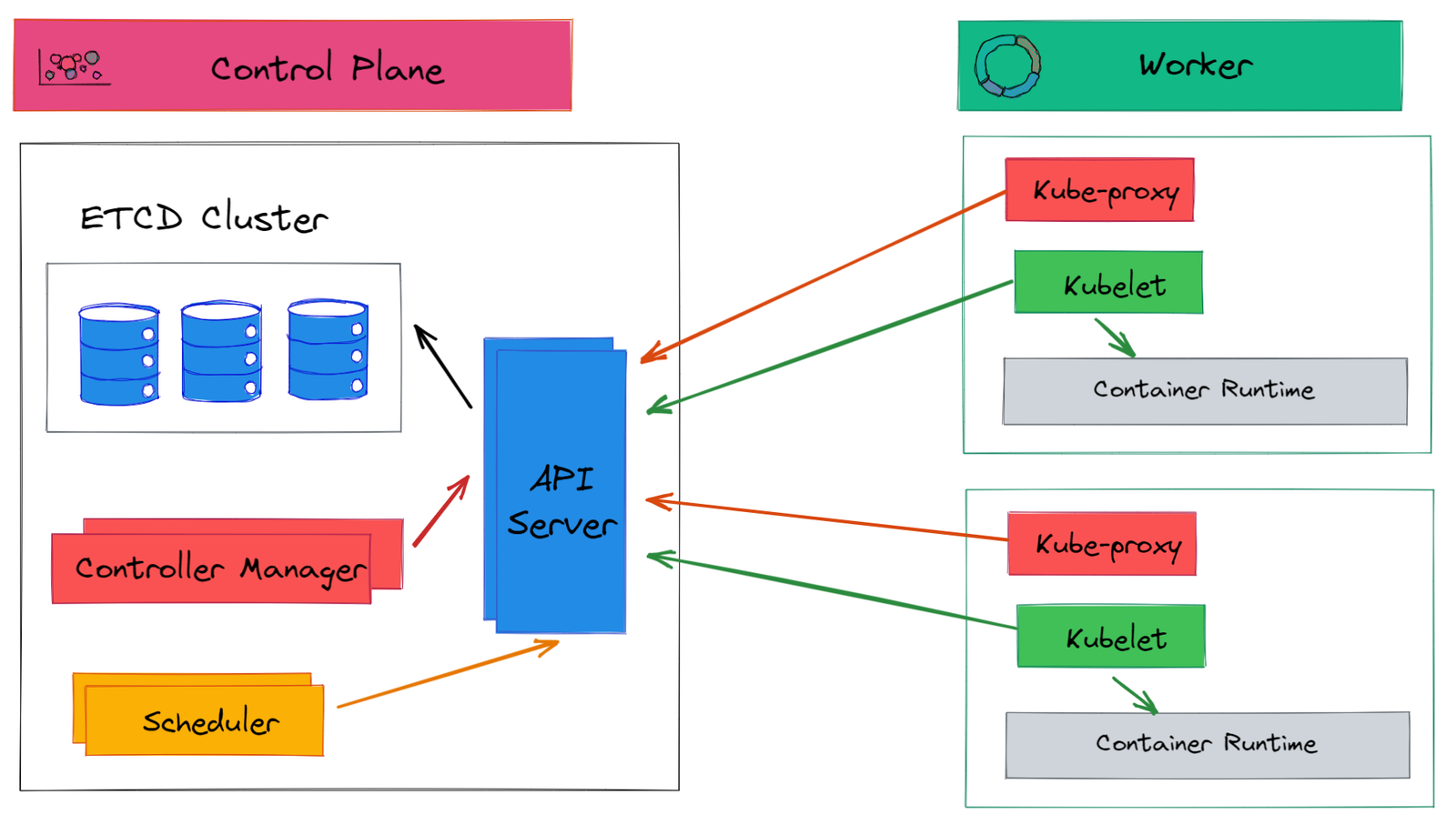

Deploying a RESTful Spring Boot Microservice on Kubernetes

August 18, 2021

Workflow Orchestration with Temporal and Spring Boot

October 29, 2022

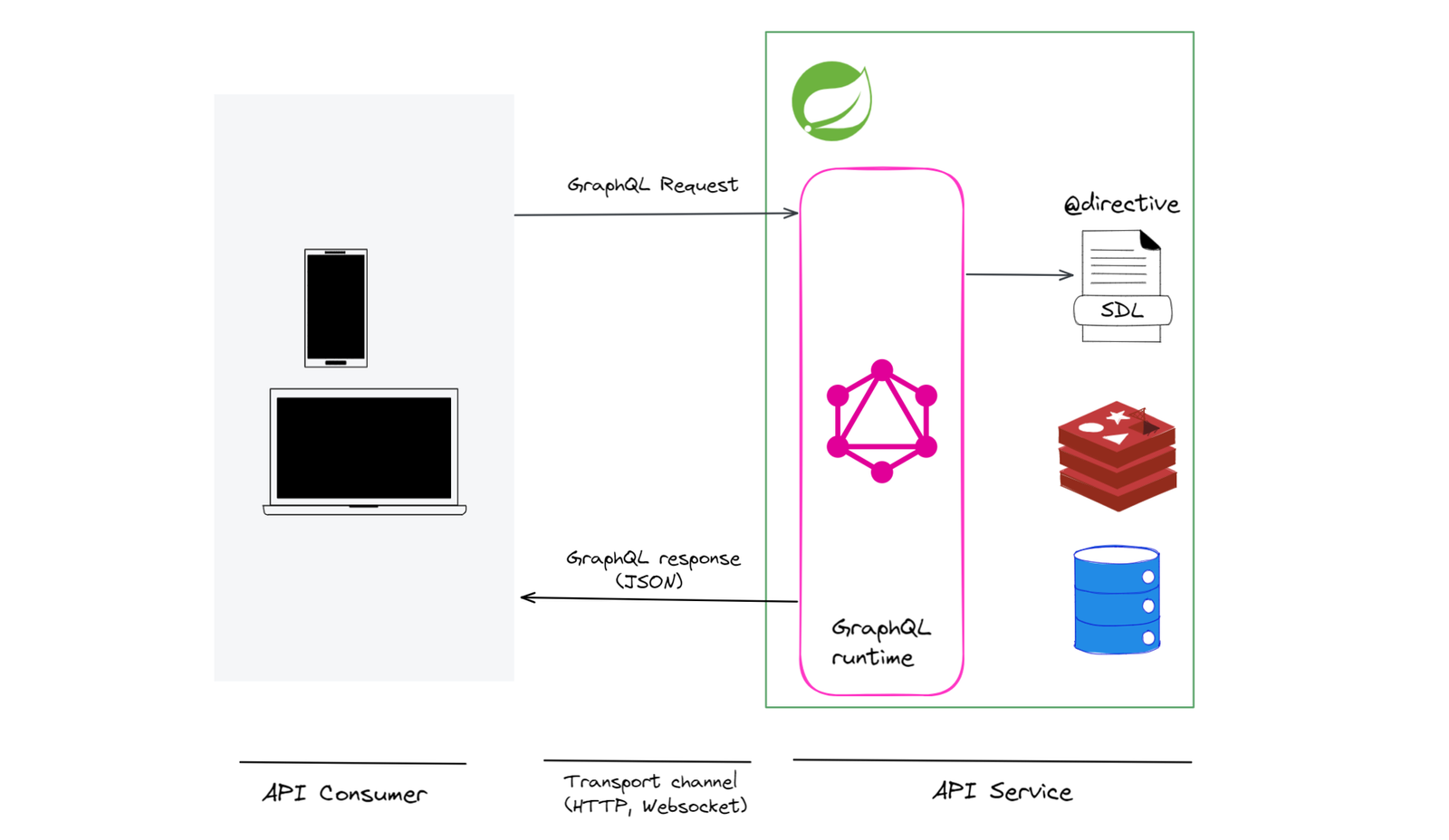

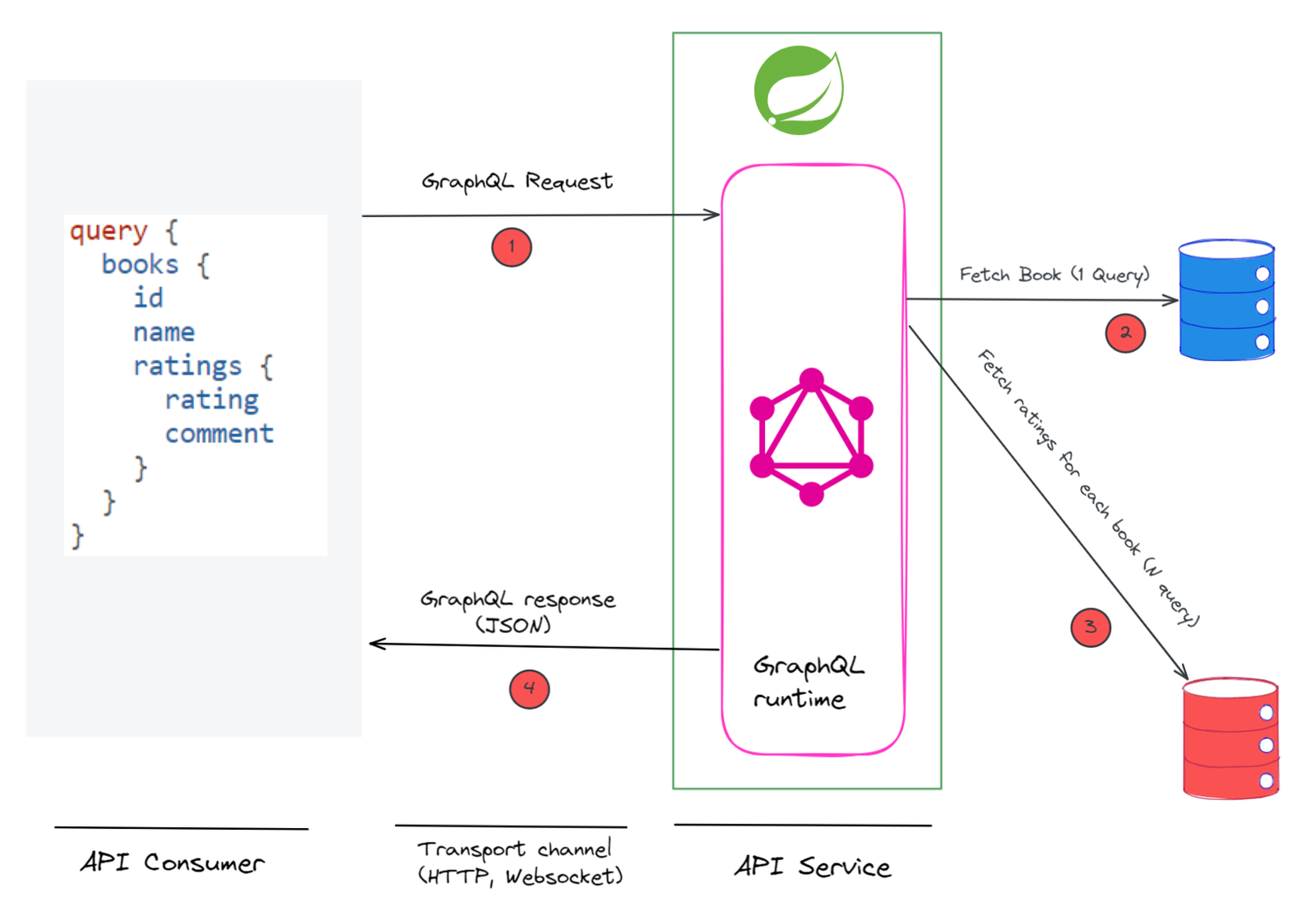

GraphQL Error Handling

August 9, 2023

Spring for GraphQL: Pagination with Code Example

July 26, 2023

Spring for GraphQL: Interfaces and Unions

May 17, 2023

gRPC Bidirectional Streaming with Code Example

February 17, 2023